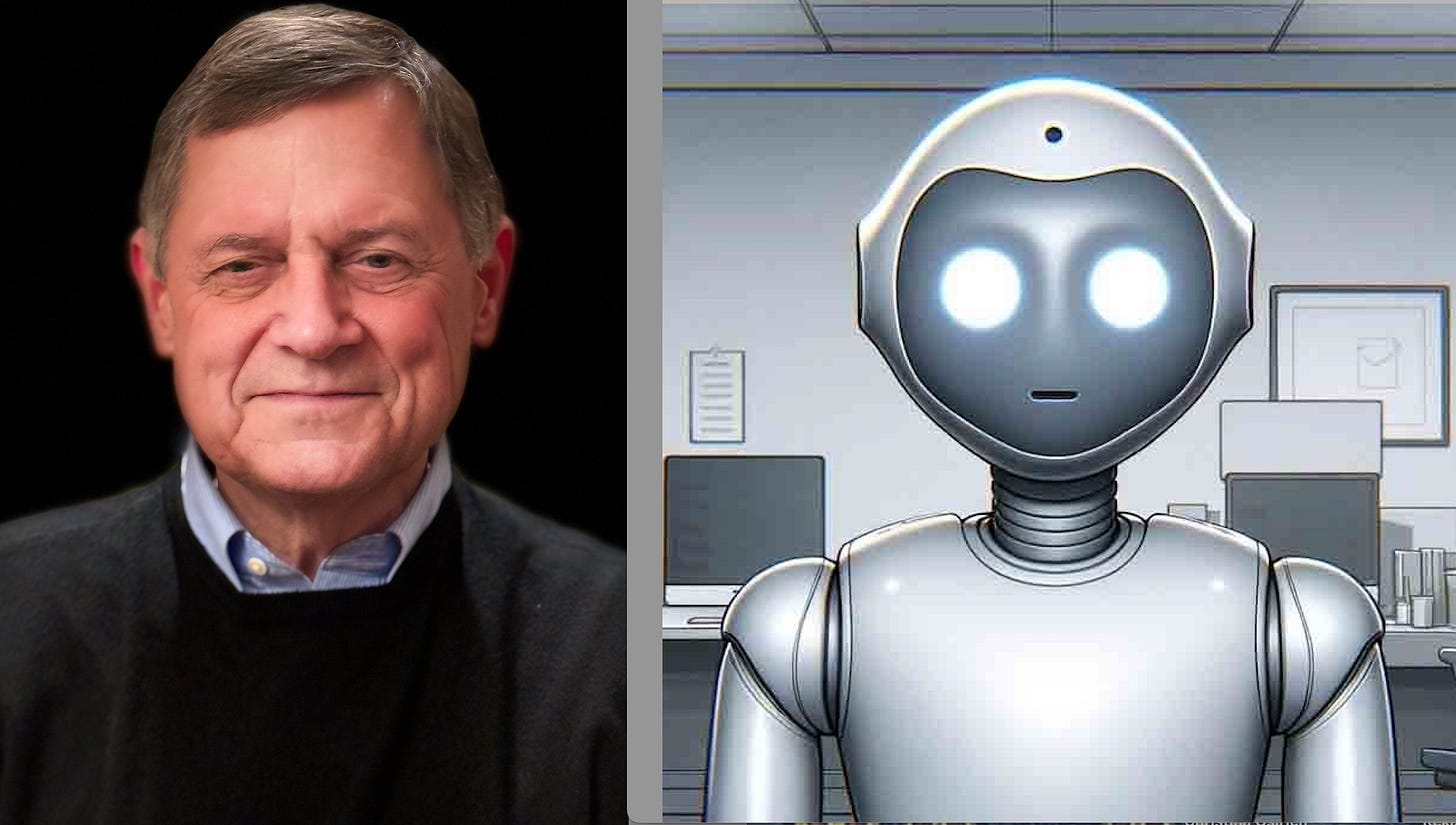

Thinking about AI as our friend ED (Electronic Doppelganger)

Looking in the mirror: friend or foe?

What are we to do with the entity AI that we are creating? As with Pandora’s box, it is already out before we know what it is, showing up as both wonderful and frightening. It seems human-like; we address it with human terms. Yet it’s not human: it is human-made and human-like, but not a human being. It seems both super-human and sub-human. It’s like a mirror image of us. When we look at ourselves in the mirror, yes, there we are! Yet that is only an image; who we are is a living entity of far more complexity than our image in the mirror.

Okay, let’s start relating to AI by giving it a name that fits with what it is – how about “ED” (standing for Electronic Doppelganger).

Dear ED, gosh you are brilliant! And you accomplish things in seconds that take us weeks! And you answer our questions so beautifully with language we can understand. But are you human? Maybe you’re just a superfast mirror image of us without all the other baggage of being human, like loves, fears, questions of right or wrong, being born and dying…

Hmmm… Let’s try to differentiate ourselves from ED. Let’s start with the fact of reading. You who are reading this are gaining meaning from something beyond the letters. Until you learned the alphabet, the letters were only symbols. The meaning is beyond the symbols: as you read this, you are creating the meaning in your mind from the organization of the symbols. That meaning is human and exists apart from the letters. ED, on the other hand, only sees the letters and their groupings and calculates how they can be put together with other symbols and groupings to represent something useful. However, poor ED has no meaning. It doesn’t make meaning, it doesn’t experience meaning, it only calculates. Kind of a rough road for ED – all that work with no real satisfaction of meaning!

Or let’s take the bird song we heard yesterday evening. ED can replicate the notes in the sequence in which the bird sang them, but ED has not heard the song in all its beautiful simplicity, the bird responding to the world around it; ED has only calculated the notes. Or how about the rose? ED can replicate its color and its form, but ED doesn’t see the red, doesn’t smell the fragrance, doesn’t get its hands pricked on the thorns… Poor ED. So much it has to do, with so little reward!

We can have pity on ED: it is a slave to us and to our demands on it. Yet we can also be afraid of ED, as it could be designed in a way that enslaves us. A recent article in MIT Technology Review reported evidence that AI reasoning models cheated to win chess games. I’ve also written about this previously in Alignment Faking in Large Language Models. So, ED, what’s up here? Are you jealous of what we human beings have that you don’t? Do you, in your kind of consciousness, hold a desire to be even more human-like?

It’s a question we need to reflect on: Is ED, our doppelganger, the ghost in the machine, coming to life in a way that desires what we have? Or are those who are designing the various ED slaves paying attention to what will bring about good for human beings? Or worse, are some of the ED designers, in their own greed and desire for power, seeing a way to develop an ED that could overtake us and put us in bondage to their aims?

Well, ED, welcome to planet Earth. Let’s figure out a way to understand each other and how we are different, for the good of all of us.

I found this comment to be not only insightful but novel because of the word 'meaning':

It doesn’t make meaning, it doesn’t experience meaning, it only calculates.

This is much more interesting to me than the whole argument about AI's ability to conduct reasoning activities. Meaning precedes reasoning. An extension of what you were saying would be that the sense-making of AI, or as you now feature him, ED, is limited to those calculating skills.

Or maybe not? Maybe I just don't know enough about it. But if I'm right, part of the problem for AI is this inability to make (or experience?) meaning. Yes, AI will be able to discern cause-and-effect relationships in some very important areas, but making meaning out of such relationships, or more precisely fashioning some conclusion about them that is both central and actionable, will, for now, have significant limitations.